Orin Kerr now lists four amicus briefs filed in the Andrew "weev" Auernheimer case. Kerr is one of the attorneys representing Auernheimer pro bono in his appeal. We have one of the amicus briefs done as text here, the one by security researchers, and now let's look closely at a second amicus [PDF], this one filed by Peiter "Mudge" Zatko, C. "Space Rogue" Thomas, Dan Hirsch, Gabriella Coleman, and other prestigious professional security researchers in support of Auernheimer. This one is particularly valuable, in that it carefully explains how a server acts on the World Wide Web and points the finger of blame at AT&T, pointing out that it had the choice to make the page private, but it failed to do so, leaving it open and public to all comers.

They even draw pictures, so there is no chance the court can miss the point of the tech lesson. And they ask the court to overturn his conviction, because private, ex post facto law-setting by private corporations has implications that are terrible to contemplate, and it may be unconstitutional:

Mr. Auernheimer’s conviction on charges of violating the Computer Fraud and Abuse Act, 18 U.S.C. §1030, implies that his actions are in some material way different than those of any web user, and that beyond this, his actions violated a clearly-delineated line of authorization as required by §1030(a)(2)(C). Neither of these statements is true. The data Mr. Auernheimer helped to access was intentionally made available by AT&T to the entire Internet, and access occurred through standard protocols that are used by every Web user. Since any determination that the data was somehow nonpublic was made by a private corporation in secret, with no external signal or possibility of notice whatsoever, such a determination amounts to a private law of which no reasonable Internet user could have notice. On this basis alone, Mr. Auernheimer’s conviction must be overturned....

The United States asks this Court to endorse the use of the criminal justice system to cover up a private corporation’s failures. AT&T published private consumer data in an inappropriate fashion. Rather than take responsibility for their act, they have asked the criminal justice system to punish the researcher who uncovered their mistake. If this tactic is allowed to flourish, it will allow corporations to choose to terminate any safety oversight of their actions, and instead rely on the criminal process to serve as a cover-up for bad acts. Corporations will have no incentive to treat consumer data with adequate care in the future, since no one but the corporations themselves will be aware of any possible danger. In essence, the precedent that the respondent seeks to create is one that will make the American taxpayer subsidize the irresponsibility and misfeasance of private corporations through the courts on a scale never before seen.

With this case, this Court has an opportunity to state that it is not acceptable for private corporations to warp the criminal justice system to shield themselves from public scrutiny in their digital public accommodations, any more than it is acceptable in any physical accommodation. Mr. Auernheimer’s “crime” was to discover that a public corporation was giving anyone access to private consumer information; he discovered this by, in essence, repeatedly adding 1 to a number. The Court should not condone the metaphorical shooting of a messenger who acted for the safety and security of all. We ask that this Court overturn Mr. Auernheimer’s conviction.

The US Constitution forbids making a law after an act has been committed and thus sweeping into the arms of the law someone who had no reason to believe the action was illegal at the time it was committed, because in fact it wasn't illegal at that time:

It is a fundamental violation of the basic concept of due process for an act to be secretly criminal; AT&T’s determination that access to documents it had made public was unauthorized, without making such a determination public in any way, amounts to the creation of a private law.


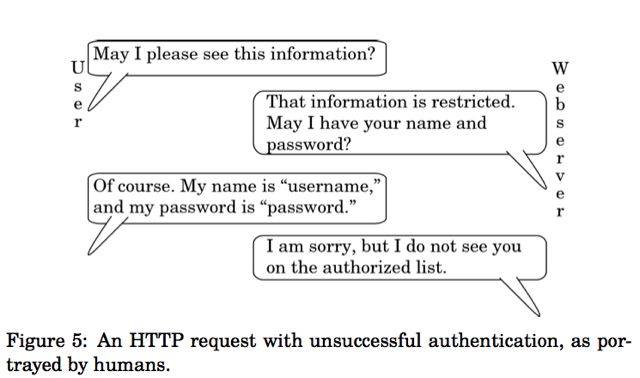

They use a standard analogy, comparing a web server to a librarian, pointing out that when you ask a librarian for a book, she can either hand you the book or ask for identification to see if you are authorized to have it. If not, she won't give it to you. All web servers are set up just like that, and AT&T failed to set up its web server to decline public requests. Instead it was open to all comers:

To use a standard analogy, a web server may be thought of as a librarian; rather than a user looking in the library stacks themselves, they ask the librarian. If the document in question is public, the librarian gives it to the user without hesitation; if the document is private, the librarian asks the user for some proof of identity, such as a username and password. If the provided username and password are on the librarian’s roster for that document, the document is given to the user; if not, the librarian refuses to give the document to the user....

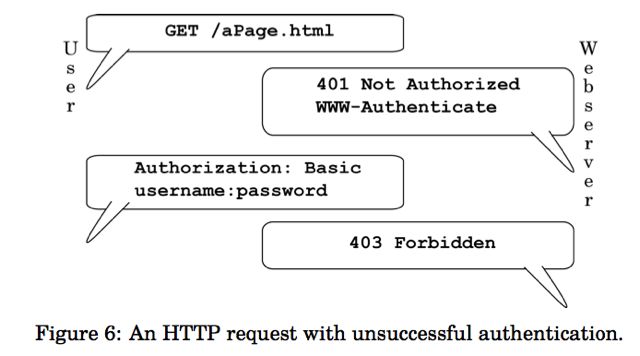

If the owner of a server wishes to restrict access to a particular document or set of documents, the owner can instruct the web server to do so.... The server’s HTTP request for identification is the code “401 Not Authorized;” this code tells the client device that some proof of identity is required. When the client receives the request, it will send an Authorization command, complete with username and password. (It may send the username and password in one of several encodings, but the command is otherwise the same.)... If the server recognizes the username and password, it will send 200 OK, along with the requested document—just as it did in Figure 2.

If our librarian does not accept the provided identification, he will refuse to provide the document.... If the server does not recognize the username and password, it will send code: “403 Forbidden.” This indicates that the username and password is not known to the server, or is not authorized to access the particular document.

AT&T could have set up its web server that way. At a minimum, that would have given the public, and Mr. Auernheimer, notice that this was not a public page. But because AT&T left it as a public page, he broke no law by accessing it in the normal way we all access web pages when we surf:

In Lambert, the Supreme Court set out a theory of actual notice of a law. In the case at hand, however, Mr. Auernheimer had not even the possibility of notice of the law in question; in effect, AT&T’s determination was secret, making it impossible for any legal scholar—let alone, any reasonable user of the Internet—to have knowledge of the legality of the action beforehand. AT&T did not use any means at its disposal, whether through the display of a warning, or through utilizing the fundamental protocol of the World Wide Web’s authorization mechanism, as described in Section 1.1, to give notice that access of the type Mr. Auernheimer aided was unauthorized. In fact, AT&T declared, at the time that the documents were accessed, that the access was authorized; instead of using the “403 Forbidden” code to signify a lack of authorization, as shown in Figure 6, it instead used the “200 OK” code that signifies that no further authorization is needed, as shown in Figure 2. This means that Mr. Auernheimer cannot be held legally responsible for exceeding his “authorized access,” since he had no way of finding out what access was “unauthorized,” and was indeed told by AT&T’s computers that his access was authorized.

Does it matter that he asked for page after page? No. You can legally read every article on Groklaw, one by one, if you want to, and it's perfectly legal and normal:

It is crucial to realize that AT&T gave the webserver its instructions: they explicitly told it to respond with consumers’ private information to anyone who gave the server a valid number, easily picked at random. With this action, AT&T deliberately made the information public to anyone who asked, set no limits whatsoever on who could ask or how often, and required no verification before handing out ostensibly private information to all comers. AT&T used technical means to signal to any user of the Internet that this data was public, not private, and treated the data accordingly.

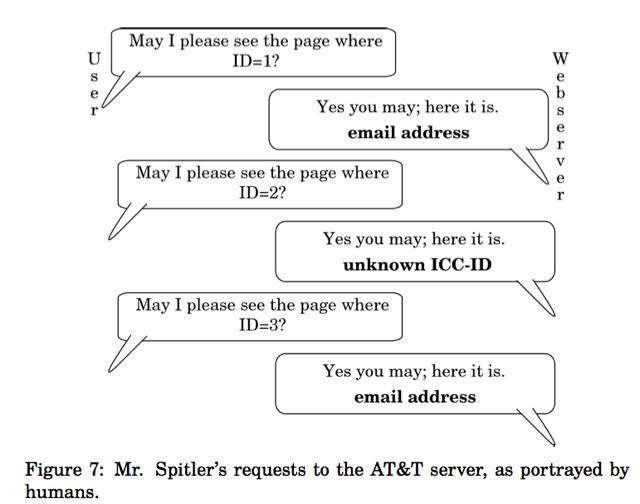

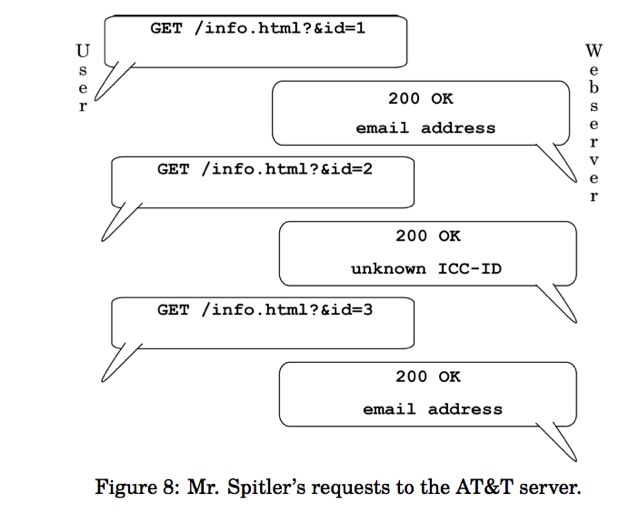

While the repeated requests outlined in Figure 8 might look odd to a lay person, they in fact represent the standard operation of the Web. A user makes these same requests whenever they use a website, whether they are clicking through cat videos, using a search engine, or reading a blog. Indeed, if a person wished to read every post on a blog in order, they might first ask for http://www.example.com/blog/1, signifying the first post; having read that, they would ask for http://www.example.com/blog/2, the second post; http://www.example.com/blog/3, the third post; and so on. (This might be done through clicking on links, or it might be done by manually changing the address displayed to the user.) There is nothing untoward about this behavior; it is the default behavior for most of the Web, and these requests are set out and sent in the same way, regardless of what device sends them, or whether a user is present.

For that matter, if you see the articles on a blog are numbered 1, 2, 3 and you see the date the blog began and know the current date, and you do the math and figure out that today's blog will be a certain number and you are right, did you break the law? Do you see now why Auernheimer said, as he was about to be led away to jail, that he was going to jail for doing math? It's not in the public interest to allow private companies to hide defects in their products. Is it against the law to tell the media if you find a dangerous defect in a car you bought? If this case stands, it would mean if Ralph Nader found a defect today in a car, he would break the law to tell the media, since cars today have computers in them. Auernheimer found a security flaw on AT&T's web server, and he told the media. Consumer Reports does that kind of reporting all the time. It helps to protect the public:

There are relatively few sources of pressure to fix design defects, whether they be in wiring, websites, or cars. The government is not set up to test every possible product or website for defects before its release, nor should it be; in addition, those defects in electronic systems that might be uncovered by the government (for instance, during an unrelated investigation) are often not released, due to internal policies. Findings by industry groups are often kept quiet, under the assumption that such defects will never come to light—just as in Grimshaw (the Ford Pinto case). The part of society that consistently serves the public interest by finding and publicizing defects that will harm consumers is the external consumer safety research community, whether those defects be in consumer products or consumer websites. In the situation at hand, AT&T was improperly safeguarding the personal information of hundreds of thousands of consumers. When Mr. Auernheimer discovered this fact, he publicized it, in precisely the same way that Consumers Union, publisher of Consumer Reports, does with each consumer-safety violation that it uncovers: he made it available to the press.

The United States would like this Court to create a rule that would allow private corporations to control the right of researchers to examine public accommodations, as long as those accommodations were on the Internet. If that rule were applied to the physical world, it would be unlawful for a worker to report a building code violation on a construction site. It would be unlawful for an environmental activist to sample water for contaminants, lest they come across things a company (which had illegally dumped waste in a river) deemed secret. It would be unlawful for a customer at a restaurant to report a rat running across his or her table to the city health authority. It would be unlawful for a newspaper to investigate whether a business is illegally discriminating against racial minorities. It would even be illegal for Ralph Nader to have published (or have done the research for) “Unsafe at Any Speed;” his analysis of design flaws inherent to certain automobiles, found while examining the design of those automobiles without permission from their manufacturers, would be illegal under a system that required that a company give its consent to any research of which it might not approve.

In fact, every car sold today contains a great deal of computers that control every facet of the car’s operation—from entertainment systems, to how the engine runs, and even braking. The CFAA interpretation proposed by the government could be used to criminalize Mr. Nader’s research; if such research were done today, analysis of the car would necessarily involve its computers. General Motors should not have been allowed, after the fact, to decide that it had not meant to allow Mr. Nader access to the Chevrolet Corvair when Mr. Nader, rather than simply driving the car (with its inherent risks) as they had intended, instead used the car to discover its design risks and flaws and to make that information available to the public. In short, private corporations would, under the rule proposed by the United States, be able to silence critics with the threat of imprisonment for publishing unfavorable research.

Here's why the group say they cared enough to file an amicus brief:

Interest of Amici Curiae

The amici, Meredith Patterson, Brendan O’Connor, Professor Sergey Bratus, Professor Gabriella Coleman, Peyton Engel, Professor Matthew Green, Dan Hirsch, Dan Kaminsky, Professor Samuel Liles, Shane MacDougall, Brian Martin, C. “Space Rogue” Thomas, and Peiter “Mudge” Zatko, are professional security researchers, working in government, academia, and private industry; as a part of their profession, they find security problems affecting the personal information of millions of people and work with the affected parties to minimize harm and learn from the mistakes that created the problem. Members of the amici have testified before Congress, been qualified as experts in federal court, and instructed the military on computer security; they have been given awards for their service to the Department of Defense, assisted the Department of Homeland Security, and spoken at hundreds of conferences. Their résumés, representing more than two centuries of combined experience, are attached as an Appendix for the information of the Court. They are concerned that the rule established by the lower court, if not corrected, will prevent all legitimate security researchers from doing their jobs; namely, protecting the populace from harm. Accordingly, they wish to aid this Court in understanding the implications of this case in the larger context of modern technology and research techniques.

Legally, they challenge the right of corporations to write laws on an ad-hoc basis:

The Computer Fraud and Abuse Act, 18 U.S.C. §1030, as construed by the United States in this case, allows a private corporation to make, and the government to enforce, a secret, after-the-fact declaration that access to information made public through standard technological processes was “unauthorized;” this determination, when used to trigger criminal liability, is a private law, which violates the United States Constitution. Though this determination was a non-governmental action, it may also constitute a violation of the Ex Post Facto Clause through its binding legal effect, as adopted by the United States. Allowing unknowable laws to be written on an ad-hoc basis by private corporations is not only unconstitutional, but also harms the public at large by preventing consumer-protecting functions in the digital world. The Court should overturn the judgment of conviction in this case.

One of the group, Brendan O'Connor, a geek who is now a law student, gives us the back story on how the group got together and decided to do this, and he gives a geek version of the list:

Meredith Patterson - she of LangSec fame, among many other areas of fame

Brendan O’Connor - your humble scrivener

Professor Sergey Bratus - Patron Saint of the Gospel of the Weird Machines

Professor Gabriella Coleman - Hacker historian and scholar writ large

Peyton Engel - Hacker, pen tester, Real Lawyer, and generally neat guy

Professor Matthew Green - Cryptographer, great professor, gets interviewed a lot about all his research

Dan Hirsch - Another LangSec researcher, analyst, and cool dude

Dan Kaminsky - Do I really need to introduce Dan Kaminsky?

Professor Samuel Liles - a professor doing research on “cyber warfare”

Shane MacDougall - 2x Defcon Black Badge winner, social engineer extraordinaire

Brian Martin - The man behind the legend behind the squirrel

C. “Space Rogue” Thomas - Founding member of The L0pht

Peiter “Mudge” Zatko - Another founder of The L0pht, but also a now-former DARPA Program Manager, responsible for Cyber Fast Track

To make sure the court knew how cool these people were, we made an Appendix. Ordinarily, the appendix on these sorts of briefs is reserved for documents from the record, affidavits, etc. Instead, we attached the CVs of everyone in the group—a staggering 61 pages worth, in the end.

The Appendix with the collection of professional CVs begins on page 29 of the PDF and goes through page 89, the end of the PDF. It's a very impressive group -- E. Gabriella Coleman, for example, requires over 20 pages to list all her honors, papers, conference keynotes and plenary talks, books and lectures. It would be hard to find a group better qualified to do it. My favorite detail from the CVs is Dan Hirsch's. He spent a year at Google, and he lists his assignment there like this:

Site Reliability Engineer, Google, Inc., Mountain View, CA.

Worked with a team of eight people to run a low-latency, highly available planet-scale storage system for petabytes of data. Debugged production issues including fiber cuts, misbehaving clients, heavy resource contention, and hardware failure. Participated in weekly on-call rotation, with an SLA requiring a five-minute response time. Helped internal clients get started using our service. Led project to automate deployment of new versions of our service across dozens of clusters; reduced SRE time spent on weekly pushes by 90%. Supported Cellbots project in 20% time. Assisted with design of the “IOIO” I/O board for Android.

"Planet-scale storage system." Love that.

Remember the guy that found the critical flaw in DNS? That's Dan Kaminsky. And he led the group that fixed it. But he did that after more than a decade as a noted security researcher, and he's advised companies like Cisco, Avaya, and Microsoft. His bio is on page 45 of the Appendix. Mudge. Everyone knows Mudge. He was with DARPA. Now he's with Google. And Space Rogue also created Hacker News Network, among other projects.

Here's the brief, from the argument section onward, as text, leaving off the header and the Appendix listing the credentials of each of the signatories, which you can read from the PDF beginning on page 29 and going through page 89:

****************************

Argument

1. ALLOWING A CORPORATION TO SERVE DATA PUBLICLY, THEN STATE AFTER THE FACT THAT ACCESS WAS SECRETLY RESTRICTED AND THUS IMPOSE CRIMINAL LIABILITY, AMOUNTS TO A PRIVATE CRIMINAL LAW, AND MAY ALSO VIOLATE THE EX POST FACTO CLAUSE

Mr. Auernheimer’s conviction on charges of violating the Computer Fraud and Abuse Act, 18 U.S.C. §1030, implies that his actions are in some material way different than those of any web user, and that beyond this, his actions violated a clearly-delineated line of authorization as required by §1030(a)(2)(C). Neither of these statements is true. The data Mr. Auernheimer helped to access was intentionally made available by AT&T to the entire Internet, and access occurred through standard protocols that are used by every Web user. Since any determination that the data was somehow nonpublic was made by a private corporation in secret, with no external signal or possibility of notice whatsoever, such a determination amounts to a private law of which no reasonable Internet user could have notice. On this basis alone, Mr. Auernheimer’s conviction must be overturned. In addition, since AT&T’s after-the-fact determination created a binding legal effect upon Mr. Auernheimer, it may violate the Ex Post Facto Clause of the United States Constitution, which would provide a separate legal basis upon which Mr. Auernheimer’s conviction must be overturned.

1.1 How a World Wide Web server works

The HyperText Transfer Protocol, or HTTP, is the language that both web clients, such as web browsers, and web servers, which host all content on the World Wide Web, speak. Internet protocols are defined by a standards body called the Internet Engineering Task Force (IETF), through documents called Requests For Comments, or RFCs; HTTP, as it is used today, was defined in RFC 2616.1 This document, promulgated by the Internet Engineering Task Force in 1999,2 specifies the required behavior of web servers and web clients of all types on the Internet. Much as speaking a common human language allows two individuals to communicate by speech, HTTP allows two computers, whether they be desktops, laptops, servers, tablets, phones, or other devices, to be part of the World Wide Web—and it applies whether a human is using a web browser, or two computers with no user input devices are exchanging web data.

HTTP is so popular—it is used by more than a billion people, and several billion devices, each day—because it is so simple. Web servers host all content on the web, whether that content is designated to be public or private. To use a standard analogy, a web server may be thought of as a librarian; rather than a user looking in the library stacks themselves, they ask the librarian. If the document in question is public, the librarian gives it to the user without hesitation; if the document is private, the librarian asks the user for some proof of identity, such as a username and password. If the provided username and password are on the librarian’s roster for that document, the document is given to the user; if not, the librarian refuses to give the document to the user.

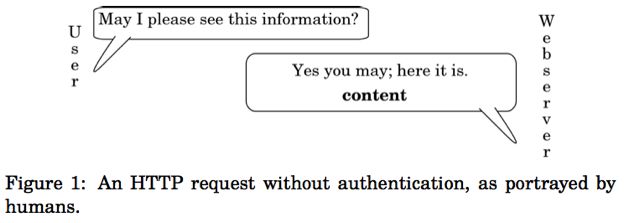

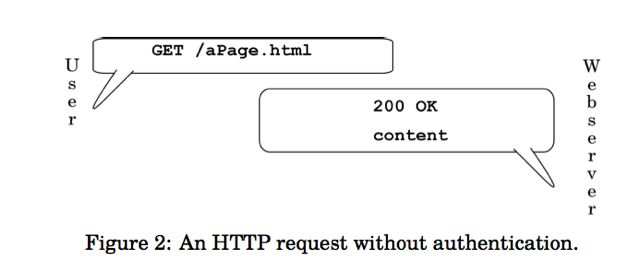

For a public document, a human might interact with the librarian as in Figure 1. Note the brief conversation: the human requests the document, and the librarian provides it immediately and without any check for credentials. The HTTP conversation, just as it is sent over the wire, is in Figure 2; the “200 OK” is the standard HTTP response that indicates that the document is being sent, as specified in the protocol document discussed above. In Figure 2, the client first sends a GET request for a specified page. If the server has been told by its owner that the page requested is available to the public, it will respond by sending 200 OK, indicating a successful request, along with the content (a page, photo, document, or file).
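As a rough illustration of the exchange described for Figure 2 (this sketch is not taken from the brief, and the host name, path, and response text are made up), the client's GET request and the server's "200 OK" status line are just short pieces of text. The helper names `build_get_request` and `parse_status_line` are hypothetical:

```python
from typing import Tuple


def build_get_request(host: str, path: str) -> str:
    """Return the text of a minimal HTTP/1.1 GET request for one document."""
    return f"GET {path} HTTP/1.1\r\nHost: {host}\r\n\r\n"


def parse_status_line(response: str) -> Tuple[int, str]:
    """Split the first line of an HTTP response into (status code, reason)."""
    status_line = response.split("\r\n", 1)[0]
    _version, code, reason = status_line.split(" ", 2)
    return int(code), reason


# Hypothetical public document on a hypothetical host.
request = build_get_request("www.example.com", "/public/document.html")

# What a server might send back for a public page: the status line,
# a header, a blank line, and then the content itself.
response = "HTTP/1.1 200 OK\r\nContent-Type: text/html\r\n\r\n<html>...</html>"

code, reason = parse_status_line(response)
print(request.splitlines()[0])
print(code, reason)
```

Nothing in the request identifies the person asking; for a public page, the server simply answers.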


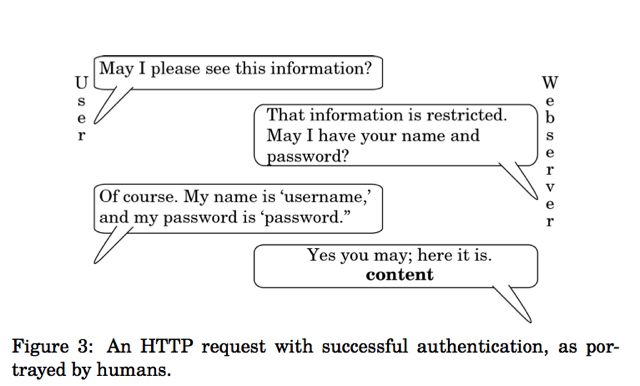

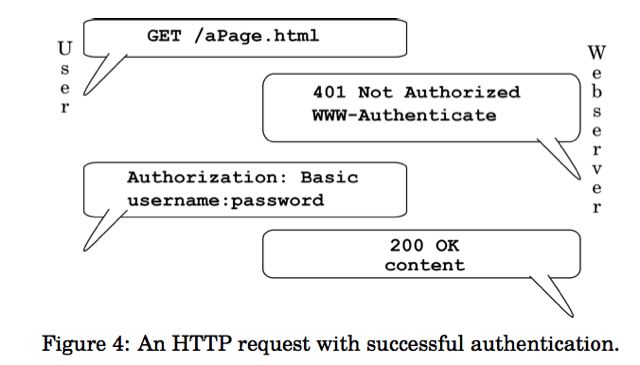

If the owner of a server wishes to restrict access to a particular document or set of documents, the owner can instruct the web server to do so. The librarian will ask the human for a proof of identity, and provide the document only if the identity is on the list, as in Figure 3. The server’s HTTP request for identification is the code “401 Not Authorized;” this code tells the client device that some proof of identity is required. When the client receives the request, it will send an Authorization command, complete with username and password. (It may send the username and password in one of several encodings, but the command is otherwise the same.) The HTTP version of this request is shown in Figure 4. If the server recognizes the username and password, it will send 200 OK, along with the requested document—just as it did in Figure 2.

If our librarian does not accept the provided identification, he will refuse to provide the document, as shown in Figure 5. If the server does not recognize the username and password, it will send code: “403 Forbidden.” This indicates that the username and password is not known to the server, or is not authorized to access the particular document. This flow is shown in Figure 6; note that no content is sent by the server in that case.
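The librarian's three possible answers can be sketched in a few lines. This is only an illustration of the model the brief describes, not AT&T's server or any real one: the documents, the roster, and the function names are all invented, and the username and password are sent in the Basic encoding, which is just one of the several encodings the brief mentions. The reason phrases come from Python's standard `http.HTTPStatus`, which labels code 401 "Unauthorized".

```python
import base64
from http import HTTPStatus
from typing import Optional, Tuple

# Hypothetical documents and roster, for illustration only.
PUBLIC_DOCS = {"/public/notice.txt": "Library hours: 9 to 5"}
PRIVATE_DOCS = {"/private/report.txt": "Quarterly report"}
ROSTER = {"/private/report.txt": {("alice", "s3cret")}}


def basic_header(user: str, password: str) -> str:
    """Encode a username and password as a Basic Authorization value."""
    token = base64.b64encode(f"{user}:{password}".encode("utf-8"))
    return "Basic " + token.decode("ascii")


def basic_credentials(header: str) -> Optional[Tuple[str, str]]:
    """Decode a Basic Authorization value back into (user, password)."""
    scheme, _, token = header.partition(" ")
    if scheme != "Basic":
        return None
    try:
        decoded = base64.b64decode(token).decode("utf-8")
    except ValueError:  # malformed base64 or text that is not UTF-8
        return None
    user, sep, password = decoded.partition(":")
    return (user, password) if sep else None


def librarian(path: str, authorization: Optional[str] = None) -> Tuple[HTTPStatus, str]:
    """Answer one request the way the brief's librarian would."""
    if path in PUBLIC_DOCS:
        # Public: hand it over, no questions asked.
        return HTTPStatus.OK, PUBLIC_DOCS[path]
    if path in PRIVATE_DOCS:
        if authorization is None:
            # Private, no identification offered: ask for it.
            return HTTPStatus.UNAUTHORIZED, ""
        if basic_credentials(authorization) in ROSTER[path]:
            return HTTPStatus.OK, PRIVATE_DOCS[path]
        # Identification offered but not on the roster: refuse, send nothing.
        return HTTPStatus.FORBIDDEN, ""
    return HTTPStatus.NOT_FOUND, ""


for auth in (None, basic_header("alice", "s3cret"), basic_header("mallory", "guess")):
    status, body = librarian("/private/report.txt", auth)
    print(int(status), status.phrase, repr(body))
```

The point of the sketch is that the challenge and the refusal only happen if the owner lists a document as private; anything on the public list is answered with 200 OK.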

AT&T instructed its servers that the email addresses it had were unrestricted content, available to anyone; in essence, they were the content for a particular set of documents. The documents were named for their associated numeric identifiers, called ICC-IDs.3 If our librarian is asked for several documents in a row, each by its identifier, there is no issue; it responds to each in turn. If it cannot find a document that corresponds to an identifier, it simply responds that while it has verified that the document number is valid, it has no information to give on that document.4 This is shown in Figure 7. Mr. Spitler simply requested many documents; for each, AT&T’s server returned a “200 OK,” with either the email address of a subscriber or a notice that it was not known, as shown in Figure 8.
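To make the behavior described for Figures 7 and 8 concrete, here is a toy model of a server configured that way. It is a sketch under stated assumptions, not a reconstruction of AT&T's system: the identifiers are short made-up integers rather than real ICC-IDs, the email addresses are invented, and `respond` and `request_range` are hypothetical names.

```python
from typing import Dict, List, Tuple

# Invented records keyed by an invented numeric identifier.
SUBSCRIBERS: Dict[int, str] = {
    1001: "a@example.com",
    1003: "c@example.com",
}


def respond(identifier: int) -> Tuple[int, str]:
    """Answer every request with 200; never challenge, never refuse."""
    email = SUBSCRIBERS.get(identifier)
    if email is None:
        return 200, "no information for this identifier"
    return 200, email


def request_range(start: int, count: int) -> List[Tuple[int, int, str]]:
    """Ask for several documents in a row, each by its identifier."""
    return [(i, *respond(i)) for i in range(start, start + count)]


for identifier, status, body in request_range(1001, 3):
    print(identifier, status, body)
```

Every row comes back with status 200, whether or not a subscriber is on file, which is the signal the brief says the server was sending.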

It is crucial to realize that AT&T gave the webserver its instructions: they explicitly told it to respond with consumers’ private information to anyone who gave the server a valid number, easily picked at random. With this action, AT&T deliberately made the information public to anyone who asked, set no limits whatsoever on who could ask or how often, and required no verification before handing out ostensibly private information to all comers. AT&T used technical means to signal to any user of the Internet that this data was public, not private, and treated the data accordingly.

While the repeated requests outlined in Figure 8 might look odd to a lay person, they in fact represent the standard operation of the Web. A user makes these same requests whenever they use a website, whether they are clicking through cat videos, using a search engine, or reading a blog. Indeed, if a person wished to read every post on a blog in order, they might first ask for http://www.example.com/blog/1, signifying the first post; having read that, they would ask for http://www.example.com/blog/2, the second post; http://www.example.com/blog/3, the third post; and so on. (This might be done through clicking on links, or it might be done by manually changing the address displayed to the user.) There is nothing untoward about this behavior; it is the default behavior for most of the Web, and these requests are set out and sent in the same way, regardless of what device sends them, or whether a user is present.
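The blog example above amounts to adding 1 to the number at the end of an address. A minimal sketch follows, using the example.com addresses from the text; the helper names `next_url` and `first_posts` are made up for illustration:

```python
import re
from typing import List


def next_url(url: str) -> str:
    """Return the address whose trailing number is one greater."""
    match = re.search(r"(\d+)$", url)
    if match is None:
        raise ValueError("address does not end in a number")
    return url[: match.start()] + str(int(match.group(1)) + 1)


def first_posts(base: str, count: int) -> List[str]:
    """List the addresses of posts 1 through count under a base address."""
    return [f"{base}/{n}" for n in range(1, count + 1)]


print(next_url("http://www.example.com/blog/1"))
for address in first_posts("http://www.example.com/blog", 3):
    print(address)
```

Whether a person edits the address bar by hand or a short script does the arithmetic, the requests that reach the server are the same.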

1.2 AT&T’s secret determination of “authorized access” creates an unconstitutional private law

It is a fundamental violation of the basic concept of due process for an act to be secretly criminal; AT&T’s determination that access to documents it had made public was unauthorized, without making such a determination public in any way, amounts to the creation of a private law.

“The rule that ‘ignorance of the law will not excuse’ is deep in our law, as is the principle that of all the powers of local government, the police power is ‘one of the least limitable.’ On the other hand, due process places some limits on its exercise. Engrained in our concept of due process is the requirement of notice.” Lambert v. People of the State of California, 355 U.S. 225, 228, 78 S.Ct. 240 (1957) (citations omitted).

In Lambert, the Supreme Court set out a theory of actual notice of a law. In the case at hand, however, Mr. Auernheimer had not even the possibility of notice of the law in question; in effect, AT&T’s determination was secret, making it impossible for any legal scholar—let alone, any reasonable user of the Internet—to have knowledge of the legality of the action beforehand. AT&T did not use any means at its disposal, whether through the display of a warning, or through utilizing the fundamental protocol of the World Wide Web’s authorization mechanism, as described in Section 1.1, to give notice that access of the type Mr. Auernheimer aided was unauthorized. In fact, AT&T declared, at the time that the documents were accessed, that the access was authorized; instead of using the “403 Forbidden” code to signify a lack of authorization, as shown in Figure 6, it instead used the “200 OK” code that signifies that no further authorization is needed, as shown in Figure 2. This means that Mr. Auernheimer cannot be held legally responsible for exceeding his “authorized access,” since he had no way of finding out what access was “unauthorized,” and was indeed told by AT&T’s computers that his access was authorized.

Moreover, to affirm the district court would provide for the enforcement of such private laws, and the handling of litigation about them, at public expense. The criminal justice system is ill-suited to serve as a tool for corporate policy interests. If a business fails to take care to avoid unwanted consequences associated with its publication of information, it cannot later be allowed to convert those consequences into felonies for the criminal justice system to handle; to permit that path would allow businesses to shirk their duty to safeguard private information, and simply pass the workload onto an already overburdened court system.

The Ninth Circuit has already rejected a system of private laws, even with notice, being used to turn a user’s access to data into a felonious act under the CFAA. In U.S. v. Nosal, 676 F.3d 854 (9th Cir. 2012), the Ninth Circuit ruled that it would not allow “every violation of a private computer use policy [to be] a federal crime.” Id. at 859.

“[T]he government’s proposed interpretation of the CFAA allows private parties to manipulate their computer-use and personnel policies so as to turn these relationships into ones policed by the criminal law.” Id. at 860.

We invite this Court to adopt the Ninth Circuit’s determination that this sort of private legal scheme is unacceptable in a society of laws.

1.3 AT&T’s post-hoc determination of “authorized access” violates the Ex Post Facto Clause

The Computer Fraud and Abuse Act criminalizes the act of exceeding authorized access to a computer, and defines authorization solely as what the user is “entitled” to access. 18 U.S.C. §1030(e)(6). As explained in Section 1.1, AT&T used technical means to make public the private information of its customers, as a response to anyone who asked for it; a reasonable user of the World Wide Web would assume that data explicitly made public would be public. However, after the data was accessed, AT&T decided that this information that it had made public should, in retrospect, have been private, and thus no user should have access to it (despite the fact that it was being handed out by their server, at their behest). This determination, insofar as it is adopted by the United States, and used as the threshold for criminal liability, makes an action criminal that was non-criminal when it was taken; as such, it amounts to a violation of the Ex Post Facto Clause of the United States Constitution.

Ex post facto laws are prohibited by Article 1, Section 9 of the United States Constitution; this means that something cannot be made illegal, and a person thereby be held liable, for an action taken before the illegality had been designated. Ex post facto laws have been broadly defined:

“1st. Every law that makes an action done before the passing of the law, and which was innocent when done, criminal; and punishes such action. 2d. Every law that aggravates a crime, or makes it greater than it was, when committed. 3d. Every law that changes the punishment, and inflicts a greater punishment, than the law annexed to the crime, when committed. 4th. Every law that alters the legal rules of evidence, and receives less, or different, testimony, than the law required at the time of the commission of the offence, in order to convict the offender.” Calder v. Bull, 3 Dall. 386, 390, 1 L.Ed. 648 (1798) (emphasis deleted).

Particularly relevant here, an ex post facto law need not be a complete legislative action for it to violate the Ex Post Facto Clause (i.e., it need not be an actual law). Instead, it need only have “binding legal effect,” as the Supreme Court restated in Peugh v. U.S., 569 U.S. ___, at *12, 133 S.Ct. 2072 (2013).

In Peugh, the Supreme Court held that even non-binding sentencing guidelines—guidelines that did not carry the force of law, and were not created through direct Congressional action—could violate the Ex Post Facto Clause. Here, the imposition of liability is actually more concerning than the stricken sentence in Peugh: AT&T, a non-governmental, non-neutral corporation, created the rule Mr. Auernheimer is charged with violating, after the time at which it is claimed that he violated it. While Peugh dealt with sentencing guidelines, the amici believe that the rule put forward need not be restricted to strictly governmental action; this non-governmental action created the requisite “binding legal effect,” and, crucially, the determination was subsequently adopted by the government when it chose to indict Mr. Auernheimer. In this case, AT&T made an after-the-fact determination that an action, already taken, had violated some previously-unarticulated rule; this determination meant that an action that in other respects was simply a request to view a web page, as explained in Section 1.1, was now criminal. This determination, and its subsequent adoption by the United States, has had the requisite “binding legal effect” upon Mr. Auernheimer in the form of the case at bar.

In Peugh, the Supreme Court held that even intervening discretionary acts could not save a sentencing guideline: “[O]ur cases make clear that ‘[t]he presence of discretion does not displace the protections of the Ex Post Facto Clause.’” Peugh, 569 U.S. at *12 (citation omitted). In this case, AT&T issued much more than a mere guideline: through the government’s adoption of its determination, it issued the new rule of law itself, after the act had been committed. Peugh holds that no discretion between then and now, such as the issuance of an indictment, prevents the situation from violating the Ex Post Facto Clause. As such, Mr. Auernheimer may not be held criminally liable for violating a law not fully actualized until after his act.

2. CRIMINALIZING ACCESS TO PUBLICLY-OFFERED MATERIAL IS NOT IN THE PUBLIC INTEREST, BECAUSE IT PREVENTS THE SECURITY RESEARCH COMMUNITY FROM EXERCISING ITS CONSUMER-PROTECTING ROLE.

Mr. Auernheimer is one example of a security researcher, a profession of which the amici are also a part. There are many thousands of security researchers worldwide5 who work in government, academia, and for private industry. They have a shared goal: to protect people from dangerous computer systems, whether they be websites that leak personal information, implantable cardioverter-defibrillators that are susceptible to remote disabling, or dams that can be controlled (or even destroyed) from anywhere in the world.6 The security research community is a part of the larger consumer safety community: as with more traditional product safety researchers, security researchers form an important part of the system of consumer protection.

The role that consumer safety and advocacy organizations and researchers play in ensuring the safety of the population has been recognized throughout the legal system. See, e.g., The Consumer Product Safety Act, 15 U.S.C. §2051, et seq. (2006) (providing protection for those who “blow the whistle” on violations of consumer safety), National Ass’n Of State Utility Consumer Advocates v. F.C.C., 457 F.3d 1238 (11th Cir. 2006) (cellular telephone service billing irregularities), International Healthcare Management v. Hawaii Coalition For Health, 332 F.3d 600 (9th Cir. 2003) (medical care pricing), Kaiser Aluminum & Chemical Corp. v. U. S. Consumer Product Safety Commission, 574 F.2d 178 (3d Cir. 1978) (aluminum house wiring), Grimshaw v. Ford Motor Co., 191 CA3d 757, 236 Cal. Rptr. 509 (Cal. Ct. App. 1981) (the Ford Pinto case), In re Harvard Pilgrim Health Care, Inc., 434 Mass. 51, 746 N.E.2d 513 (Mass. 2001) (medical care organizations), Porter v. South Carolina Public Service Com’n, 333 S.C. 12, 507 S.E.2d 328 (S.C. 1998) (BellSouth statewide rate-setting corruption), Utah State Coalition of Sr. Citizens v. Utah Power and Light Co., 776 P.2d 632 (Utah 1989) (protecting the elderly from a state power monopoly). These organizations do not ask for, nor do they require, the permission of the companies whose publicly-accessible products they test for problems that endanger the safety of society at large, and every member of society specifically. If there were such a requirement, the permission would in reality never be given; as in the case at hand, the existence of the problem in the first place, combined with either a lack of awareness on the part of the responsible company, or the company’s unwillingness to fix the problem, would be embarrassing to the company.

There are relatively few sources of pressure to fix design defects, whether they be in wiring, websites, or cars. The government is not set up to test every possible product or website for defects before its release, nor should it be; in addition, those defects in electronic systems that might be uncovered by the government (for instance, during an unrelated investigation) are often not released, due to internal policies. Findings by industry groups are often kept quiet, under the assumption that such defects will never come to light, just as in Grimshaw (the Ford Pinto case). The part of society that consistently serves the public interest by finding and publicizing defects that will harm consumers is the external consumer safety research community, whether those defects be in consumer products or consumer websites. In the situation at hand, AT&T was improperly safeguarding the personal information of hundreds of thousands of consumers. When Mr. Auernheimer discovered this fact, he publicized it, in precisely the same way that Consumers Union, publisher of Consumer Reports, does with each consumer-safety violation that it uncovers: he made it available to the press.

The United States would like this Court to create a rule that would allow private corporations to control the right of researchers to examine public accommodations, as long as those accommodations were on the Internet. If that rule were applied to the physical world, it would be unlawful for a worker to report a building code violation on a construction site. It would be unlawful for an environmental activist to sample water for contaminants, lest they come across things a company (which had illegally dumped waste in a river) deemed secret. It would be unlawful for a customer at a restaurant to report a rat running across his or her table to the city health authority. It would be unlawful for a newspaper to investigate whether a business is illegally discriminating against racial minorities. It would even be illegal for Ralph Nader to have published (or have done the research for) “Unsafe at Any Speed;” his analysis of design flaws inherent to certain automobiles, found while examining the design of those automobiles without permission from their manufacturers, would be illegal under a system that required that a company give its consent to any research of which it might not approve.

In fact, every car sold today contains a great many computers that control every facet of the car’s operation, from entertainment systems, to how the engine runs, and even braking. The CFAA interpretation proposed by the government could be used to criminalize Mr. Nader’s research; if such research were done today, analysis of the car would necessarily involve its computers. General Motors should not have been allowed, after the fact, to decide that it had not meant to allow Mr. Nader access to the Chevrolet Corvair when Mr. Nader, rather than simply driving the car (with its inherent risks) as they had intended, instead used the car to discover its design risks and flaws and to make that information available to the public. In short, private corporations would, under the rule proposed by the United States, be able to silence critics with the threat of imprisonment for publishing unfavorable research.

In addition, the government, private corporations, and indeed the whole world have benefited from the research being done by independent security researchers. One flaw, discovered by one of the amici, Dan Kaminsky, affected the core naming infrastructure of the Internet; his publication of the vulnerability to the affected entities—private corporations, governments, and open source projects—resulted in the aversion of a catastrophe; in essence, the flaw would have meant the complete destruction of the Internet. Members of the amici have reported vulnerabilities in software created by Microsoft, VeriSign, McAfee, and many others of the world’s largest corporations, whose software is used by millions of people every day. These researchers have prevented untold financial and privacy losses to entities large and small; it is therefore crucial that their continued right to research be protected.

There is no reason why the electronic world and the physical world should differ so mightily: as an increasing amount of an average person’s day-to-day life moves online (from paying bills to registering cars, and from shopping, to trading stocks, to musical performance and art appreciation), any conceivable rationale for this difference becomes facially unconvincing. The increased prominence of the digital world means that more scrutiny, not less, should apply to those who offer up public accommodations on the Internet (or in any digital forum). If, as Justice Brandeis once said, sunlight is to be a disinfectant, then it is imperative that if a public corporation chooses to endanger the safety of consumer information, we allow security researchers to publish their results and warn the public at large.

CONCLUSION

The United States asks this Court to endorse the use of the criminal justice system to cover up a private corporation’s failures. AT&T published private consumer data in an inappropriate fashion. Rather than take responsibility for their act, they have asked the criminal justice system to punish the researcher who uncovered their mistake. If this tactic is allowed to flourish, it will allow corporations to choose to terminate any safety oversight of their actions, and instead rely on the criminal process to serve as a cover-up for bad acts. Corporations will have no incentive to treat consumer data with adequate care in the future, since no one but the corporations themselves will be aware of any possible danger. In essence, the precedent that the respondent seeks to create is one that will make the American taxpayer subsidize the irresponsibility and misfeasance of private corporations through the courts on a scale never before seen.

With this case, this Court has an opportunity to state that it is not acceptable for private corporations to warp the criminal justice system to shield themselves from public scrutiny in their digital public accommodations, any more than it is acceptable in any physical accommodation. Mr. Auernheimer’s “crime” was to discover that a public corporation was giving anyone access to private consumer information; he discovered this by, in essence, repeatedly adding 1 to a number. The Court should not condone the metaphorical shooting of a messenger who acted for the safety and security of all. We ask that this Court overturn Mr. Auernheimer’s conviction.

Respectfully submitted,

/s/ Alexander Muentz

Alexander Charles Muentz

Attorney for Amici

_________

1 Network Working Group, RFC 2616 - Hypertext Transfer Protocol – HTTP/1.1, Internet Engineering Task Force (June 1999), http://tools.ietf.org/html/rfc2616.

2 Previous versions of HTTP have been standardized before 1999 by the IETF, but RFC 2616 is the most recent version of HTTP.

3 A Subscriber Identity Module, or “SIM Card,” is a small replaceable chip inside mobile phones or other mobile devices that use the Global System for Mobile Communications (GSM) protocol; in the United States, AT&T and T-Mobile use GSM. An Integrated Circuit Card Identifier, or ICC-ID, is a number up to twenty digits in length that identifies a SIM card. It is important to note that an ICC-ID is not an authenticating number; it could be compared to a football player’s jersey number, in that it was not designed to be used for anything more than the most transient identification.

4 This is because AT&T’s server would respond the same way to each request, regardless of whether it knew a particular ICC-ID; if it did not know an ID, it would simply fail to provide an email address in that otherwise-blank space. A server could also be configured to give the response “404 Not Found,” if it encountered an ID of which it had not heard.

5 One security research conference, the annual DEF CON conference in Las Vegas, NV, had more than 13,000 attendees in 2012.

6 Talks on each of these were presented at DEF CON in 2012, among many other presentations.
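
[Editorial illustration, not part of the brief.] Footnote 4 contrasts two ways a server might answer a request for an identifier it does not know, and the Conclusion describes the discovery as "repeatedly adding 1 to a number." The following is a minimal sketch of that idea in Python, under loud assumptions: the table `KNOWN_IDS`, the function names, the short integer IDs, and the example.com addresses are all hypothetical and invented here. It is a local toy with no network access and does not represent AT&T's actual server or anyone's actual script.

```python
from __future__ import annotations

# Hypothetical in-memory stand-in for a server's lookup table.
# Real ICC-IDs are up to twenty digits; short numbers keep the sketch readable.
KNOWN_IDS = {
    1000: "alice@example.com",
    1002: "carol@example.com",
}


def respond_blank(icc_id: int) -> tuple[int, str]:
    """Behaviour described in footnote 4: always answer 200 and leave the
    email field empty when the ID is unknown."""
    return 200, KNOWN_IDS.get(icc_id, "")


def respond_404(icc_id: int) -> tuple[int, str]:
    """Alternative configuration mentioned in footnote 4: answer
    404 Not Found for an ID the server has not heard of."""
    if icc_id in KNOWN_IDS:
        return 200, KNOWN_IDS[icc_id]
    return 404, ""


def enumerate_ids(respond, start: int, count: int) -> list[tuple[int, int, str]]:
    """Ask for `count` consecutive IDs, adding 1 to the number each time."""
    results = []
    icc_id = start
    for _ in range(count):
        status, email = respond(icc_id)
        results.append((icc_id, status, email))
        icc_id += 1
    return results


if __name__ == "__main__":
    for row in enumerate_ids(respond_blank, 1000, 3):
        print(row)
```

In this sketch the two responders differ only in how they signal an unknown ID: the first returns an otherwise normal page with an empty field, the second returns an explicit "404 Not Found". Neither performs any check of who is asking, which is the point the brief makes about the data having been offered to any requester.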
